As with every technological advancement, generative artificial intelligence tools like OpenAI's ChatGPT, the image generator Midjourney or Claude, the chatbot created by AI startup Anthropic, are used not only for productivity and creative work but also, increasingly, for scams and abuse.

Among this new wave of malicious content, deepfakes are especially noteworthy.

These artificially generated audio and video clips have been used to scam voters by impersonating politicians and to create nonconsensual pornographic imagery of celebrities.

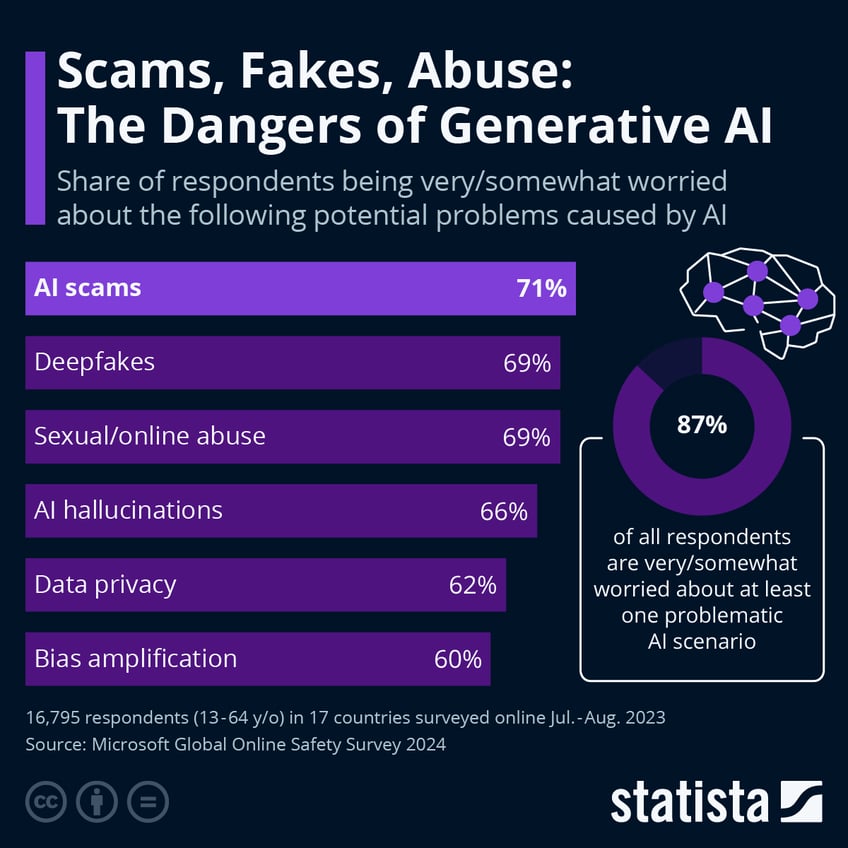

As Statista's Florian Zandt reports, a recent Microsoft survey shows that fakes, scams and abuse are what online users worldwide worry about most.

Seventy-one percent of respondents across 17 countries surveyed by Microsoft in July and August 2023 were very or somewhat worried about AI-assisted scams.


Although the survey does not specify what constitutes this kind of scam, it most likely involves impersonating a public figure, a government official or a close acquaintance of the respondent.

Deepfakes and sexual or online abuse tie for second place at 69 percent each.

AI hallucinations, defined as chatbots like ChatGPT presenting nonsensical answers as facts due to issues with their training material, come in fourth. Data privacy concerns, which stem from large language models being trained on users' publicly available data without their explicit consent, take fifth place with 62 percent. Overall, 87 percent of respondents were at least somewhat worried about at least one problematic AI scenario.

The market for artificial intelligence is huge, estimated by various sources at between $300 billion and $550 billion in 2024, yet the survey results indicate that ignoring its potential pitfalls and dangers could prove detrimental to society at large. This is especially true in sensitive areas like politics. With the U.S. presidential election looming in the fall, the social media landscape is bound to be rife with artificially generated mis- and disinformation.

At a recent hearing on deepfakes and the use of AI in elections, Ben Colman, CEO of the deepfake detection company Reality Defender, praised some aspects of generative AI while also highlighting its dangers:

"I cannot sit here and list every single malicious and dangerous use of deepfakes that has been unleashed on Americans, nor can I name the many ways in which they can negatively impact the world and erode major facets of society and democracy," said Colman.

"I am, after all, only allotted five minutes. What I can do is sound the alarm on the impacts deepfakes can have not just on democracy, but America as a whole."

Will this new path for mis-, dis- and malinformation simply become 'regulated' to fit the government's definition of 'truth'... 'for our own good'?